The “Small Eye” Glitch: When AI Bias Meets the Driver’s Seat

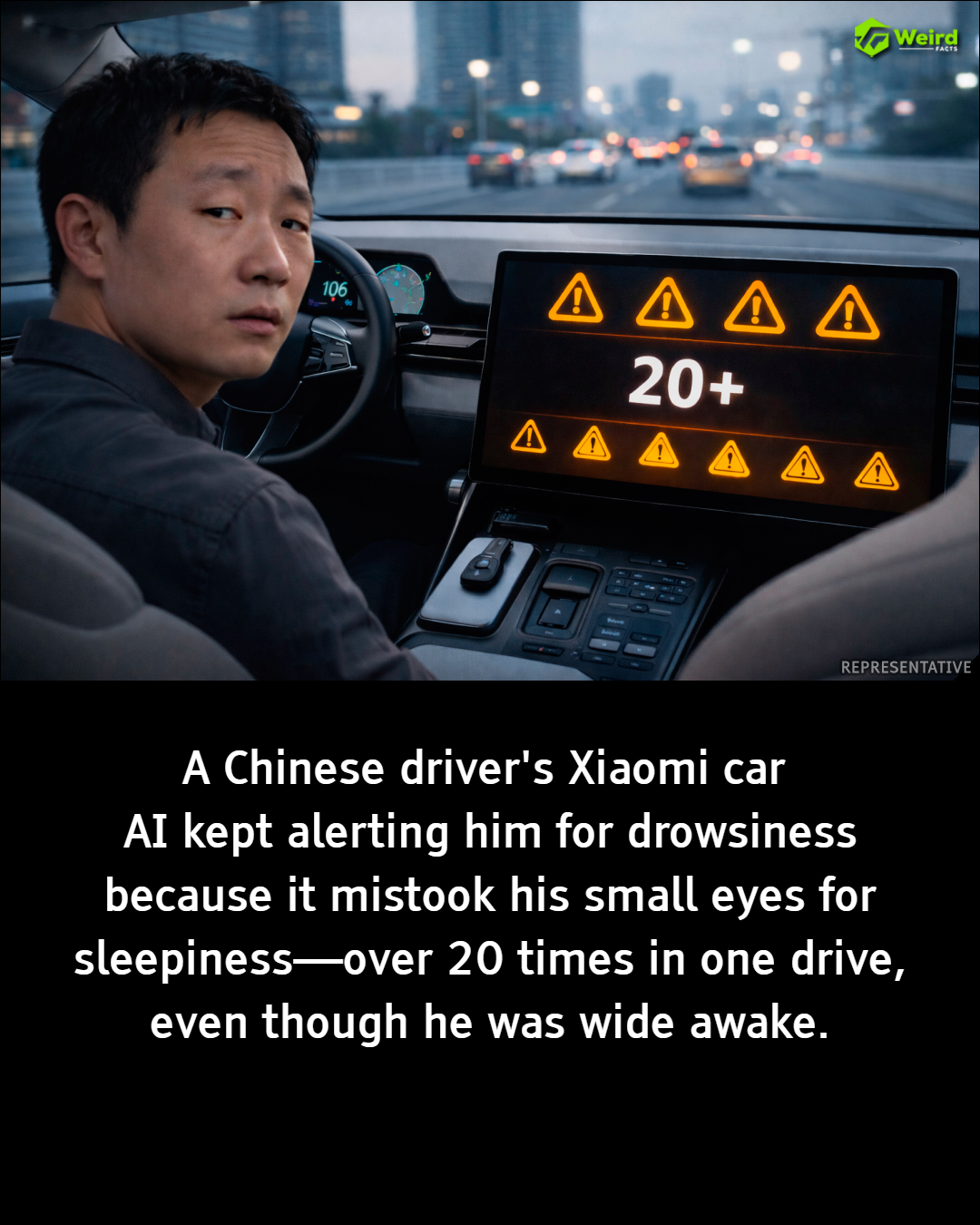

In June 2025, a routine drive in Zhejiang, China, became a viral lesson in the unintended consequences of modern technology. Mr. Li, who drives a high-performance Xiaomi SU7 Max, found himself locked in a frustrating battle of wills, not with traffic but with his car’s artificial intelligence.

The Conflict: Alertness vs. Algorithms

The Xiaomi SU7 Max is equipped with a sophisticated driver-monitoring system (DMS). Using interior cameras and infrared sensors, the AI is designed to track eye movements and eyelid “openness” to detect signs of fatigue or distraction. If the system determines a driver is falling asleep, it triggers audible and visual warnings to ensure safety.
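The article does not describe Xiaomi’s actual algorithm, so the following is only a generic sketch of one well-known way to turn eyelid “openness” into a fatigue alert, not the SU7’s implementation. It assumes a face-landmark detector already supplies six points around each eye and computes the widely used eye aspect ratio (EAR), where lower values mean more closed eyes. It then applies a PERCLOS-style rule (percentage of eyelid closure): alert only when too many frames in a recent window look closed. The class name `DrowsinessMonitor` and every threshold are hypothetical, chosen for illustration.

```python
import math
from collections import deque

Point = tuple[float, float]

def eye_aspect_ratio(eye: list[Point]) -> float:
    """Eye aspect ratio from six landmarks ordered p1..p6.

    p1 and p4 are the eye corners; p2/p3 sit on the upper lid and
    p6/p5 on the lower lid below them. Lower EAR means a more closed eye.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

class DrowsinessMonitor:
    """Hypothetical PERCLOS-style rule: alert when too many recent frames look closed."""

    def __init__(self, closed_threshold: float = 0.2, window: int = 30,
                 max_closed_fraction: float = 0.5) -> None:
        self.closed_threshold = closed_threshold        # illustrative cutoff, not Xiaomi's value
        self.max_closed_fraction = max_closed_fraction  # illustrative alert level
        self.recent: deque[bool] = deque(maxlen=window)  # sliding window of "closed" flags

    def update(self, ear: float) -> bool:
        """Record one frame's EAR; return True if an alert should fire."""
        self.recent.append(ear < self.closed_threshold)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough history yet
        closed_fraction = sum(self.recent) / len(self.recent)
        return closed_fraction > self.max_closed_fraction
```

Averaging over a window rather than reacting to a single frame is what keeps ordinary blinks from triggering warnings; the monitor stays silent until its window is full and then fires only when more than half of the recent frames fall below the closed threshold.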

However, the system encountered a variable it wasn’t prepared for: Mr. Li’s facial features. Because Mr. Li has naturally small eyes, the AI misread his normal, fully open eyes as drooping eyelids, a classic sign of drowsiness.
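One plausible explanation for this kind of misfire, offered as an assumption rather than a diagnosis of Xiaomi’s software, is a single fixed openness cutoff applied to every driver. The sketch below contrasts that with per-driver calibration, a common mitigation in which the cutoff is set as a fraction of the driver’s own alert baseline. It assumes a per-frame openness score (such as an eye aspect ratio) where lower means more closed; the function names, the 0.20 cutoff, the 0.6 ratio, and the scores are all hypothetical.

```python
from statistics import median

# Hypothetical one-size-fits-all cutoff on an eye-openness score;
# lower scores mean more closed eyes.
FIXED_THRESHOLD = 0.20

def is_closed_fixed(openness: float) -> bool:
    """Population-wide rule: the same cutoff for every driver."""
    return openness < FIXED_THRESHOLD

def calibrate(baseline: list[float], ratio: float = 0.6) -> float:
    """Per-driver cutoff: a fraction of this driver's own open-eye median.

    Assumes `baseline` was recorded while the driver was known to be alert.
    """
    return ratio * median(baseline)

def is_closed_calibrated(openness: float, threshold: float) -> bool:
    """Personalized rule: compare against the driver's calibrated cutoff."""
    return openness < threshold

# Synthetic scores for an alert driver whose eyes are naturally narrow.
alert_small_eyes = [0.17, 0.16, 0.18, 0.17]

print([is_closed_fixed(s) for s in alert_small_eyes])               # every frame flagged
cutoff = calibrate(alert_small_eyes)
print([is_closed_calibrated(s, cutoff) for s in alert_small_eyes])  # no frame flagged
print(is_closed_calibrated(0.05, cutoff))                           # a real closure still counts
```

Calibration carries its own risk: if the baseline is captured while the driver is already drowsy, the cutoff drops and genuine fatigue can go unflagged, so a production system would need safeguards around that step.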

During a single trip, the vehicle issued more than 20 false alerts, repeatedly chiming “Please focus on driving.” Despite being fully caffeinated and wide awake, Mr. Li had to open his eyes unnaturally wide just to satisfy the algorithm and silence the alarms. The irony was palpable: the system meant to reduce driver distraction had become the primary source of it.

A Viral Catalyst for Debate

The incident was captured on video by Mr. Li’s sister and quickly exploded across Chinese social media platforms like Weibo. While many viewers found humor in the absurdity of the situation, the footage sparked a serious conversation regarding AI bias and the lack of diversity in biometric training data.

The core of the issue is a form of “over-optimization”: if an AI is trained primarily on a dataset with specific eyelid proportions, it becomes tuned to those proportions and may misjudge eyes that fall outside them. This is more than a minor inconvenience; it is a technical oversight that can lead to:

- Safety Fatigue: Drivers may begin to ignore real warnings if false alerts are constant.
- User Frustration: A premium driving experience is degraded by “nagging” software.
- Inclusivity Gaps: The technology fails to account for diverse ethnicities and physical traits.
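Gaps like these can be measured before a system ships. A minimal sketch, assuming labeled evaluation data in which each trip segment records the driver’s group (for example, an eye-shape category), whether the driver was actually drowsy, and whether the system alerted: computing the false-alert rate separately for each group exposes a disparity that a single overall accuracy figure can hide. The function name, group labels, and numbers are hypothetical and synthetic.

```python
from collections import defaultdict

def false_alert_rates(records):
    """Per-group false-alert rate.

    `records` is an iterable of (group, was_drowsy, alerted) tuples, one per
    evaluated trip segment. Only segments where the driver was NOT drowsy
    are counted, since only those can produce a false alert.
    """
    awake = defaultdict(int)   # alert-driver segments per group
    alerts = defaultdict(int)  # of those, how many triggered a warning
    for group, was_drowsy, alerted in records:
        if not was_drowsy:
            awake[group] += 1
            alerts[group] += int(alerted)
    return {group: alerts[group] / awake[group] for group in awake}

# Synthetic evaluation data with hypothetical group labels.
records = (
    [("group_a", False, False)] * 9 + [("group_a", False, True)]
    + [("group_b", False, True)] * 6 + [("group_b", False, False)] * 4
    + [("group_b", True, True)]  # a correct alert; excluded from the rate
)
print(false_alert_rates(records))  # {'group_a': 0.1, 'group_b': 0.6}
```

Here both groups see the same system, yet alert drivers in `group_b` are warned six times as often, which is exactly the pattern that breeds safety fatigue.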

Moving Forward

Xiaomi has since acknowledged the feedback, highlighting the need for continuous software refinement. This event serves as a reminder that as we move toward a world of autonomous and AI-assisted travel, the “human element” is more diverse than a standard line of code might suggest. For AI to truly keep us safe, it first needs to see us for who we actually are.